Norric AI / AI-native Enterprise Platform + B2B SaaS

The answer exists. It's just buried in last quarter's email.

Norric AI is an AI-native operating system that helps private market firms respond to institutional DDQ and RFP requests. I designed a system where AI supports speed and consistency, while humans retain ownership over judgment and risk.

Role

Lead Product Designer & AI Product Strategist

Duration

Sep – Dec 2025

Company

Norric

Collaborators

CEO, Front/Back-end Developer, 6 Product Design Interns

Skills

Product Shaping

IA

Interaction Design

Design System

AI-assisted Review Experience

WHAT'S A DDQ?

A DDQ (Due Diligence Questionnaire) is a standardized set of questions that institutional investors send to fund managers before committing capital. It covers firm history, investment strategy, compliance, risk management, and more.

Think of it as a 100+ question exam that firms must complete perfectly, repeatedly, every quarter. This project was about designing boundaries, not full automation for its own sake.

MY ROLE & CONTRIBUTIONS

Product Strategy

Shaped product direction with CEO, defined core workflows and AI boundaries

Information Architecture

Designed system structure for DDQ workspace, repository, and AI agent

Interaction Design

Created response builder, matching system, and review flows

Design System

Built component library for consistent enterprise experience

AI Experience

Defined where AI helps and where humans decide

Overview

Same questions. Every quarter. Answers buried everywhere.

The questions aren't hard. 60% are identical across investors. But finding where you answered them last time? That's where teams lose hundreds of hours.

I designed Norric to make past answers instantly findable, progress always visible, and accountability crystal clear—while keeping final decisions in human hands.

PROJECT CONTEXT

Users

Investor Relations (IR) teams at PE/VC firms responding to 10-50+ DDQs per quarter

Stakeholders

Institutional investors (pension funds, endowments, sovereign wealth funds)

The Reality

60% of questions repeat; answers scattered across platforms

The Goal

One place to manage DDQs from start to finish

The Constraint

AI assists, but humans must own final decisions (legal liability)

The problem

Writing the answer takes 5 minutes. Finding the old one takes 30.

Receive DDQ

PDF arrives via email, manually parsed question by question

Find past answers

Search emails, SharePoint, Slack, ask colleagues

Draft response

Copy-paste from old docs, hope it's still accurate

Review

Email threads, file confusion, "which one is final?"

Submit

Manual export, no audit trail of who approved what

Pain point

60% of questions repeat

Impact

Teams rewrite the same answers without access to prior versions

Pain point

Answers scattered everywhere

Impact

More time spent hunting for old answers than writing new ones

Pain point

Progress is invisible

Impact

Status has to be chased over email and in meetings

Pain point

Deadline collisions

Impact

Multiple DDQs due simultaneously, every quarter

WHAT'S MISSING

Solution

One workspace. Findable answers. Visible progress. Human sign-off.

I didn't redesign how investors ask. I redesigned how teams find, write, review, and submit.

Core flows

01 Drag. Drop. Searchable.

From inbox to answers in seconds.

Teams drag incoming DDQs into a single searchable repository. AI auto-matches each question to past responses, flagging exact matches, high-confidence suggestions, and gaps that need fresh answers.

02 Found by AI. Approved by you.

AI flags issues. You decide what's next.

Instead of leaving users to manually audit everything, the system highlights the issue and suggests next steps: review the document, compare with last year's submission, and update the answer.

03 Question-level ownership

The right expert on every answer.

Route each question to legal, compliance, ops, or IR — not the whole document. Everyone knows exactly what's theirs.

Research

Before solutions, I needed to understand the workflow that doesn't work.

The initial brief was "add AI to DDQ workflows." Before acting on it, I had two questions: Where does the current process actually break down? And what do IR teams need, as opposed to what they say they need?

What I Did

User Persona

Design Decisions

Why I made the choices I made

1. Why does AI suggest instead of auto-fill?

Legal liability

Firms must own their submitted responses

Context matters

Past answers may be outdated or situation-specific

Trust takes time

Users need to verify before trusting AI suggestions

-> AI surfaces past answers with confidence levels. Humans always make the final call.

2. Why confidence levels (Exact / High / Low)?

Not all matches are equal

"Exact" = same investor, same question. "High" = similar but needs checking.

Users need to calibrate trust

Knowing confidence helps them decide when to verify

-> Visual confidence badges on every match. Users learn to trust "Exact" and double-check "High."
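To make the tiers concrete, here is a minimal sketch of how a match could be assigned a confidence level. It is an illustration under stated assumptions, not Norric's matching logic: the `PastAnswer` type, the `confidence` function, the 0.8 cutoff, and the use of Python's `difflib.SequenceMatcher` for text similarity are all hypothetical. "Exact" and "High" follow the definitions above; treating everything else as "Low" is an assumption, since the source does not define that tier.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class PastAnswer:
    """A previously submitted answer (hypothetical shape)."""
    investor: str
    question: str
    answer: str


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(text.lower().split())


def confidence(investor: str, question: str, past: PastAnswer,
               high_threshold: float = 0.8) -> str:
    """Return "Exact", "High", or "Low" for one candidate match.

    high_threshold is an illustrative cutoff, not a value from the product.
    """
    same_investor = investor == past.investor
    same_question = normalize(question) == normalize(past.question)
    if same_investor and same_question:
        # Same investor, same question: the only case labeled "Exact".
        return "Exact"
    # Otherwise fall back to character-level similarity of the question text.
    similarity = SequenceMatcher(
        None, normalize(question), normalize(past.question)
    ).ratio()
    return "High" if similarity >= high_threshold else "Low"
```

With a past answer stored for "Pension Fund A", the same question from that investor comes back as "Exact", while the identical question from a different investor comes back as "High". That is the case the badge asks users to double-check.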

3. Why can't AI auto-submit?

Compliance requirements

Institutional investors require human accountability

Risk management

One wrong answer can damage firm reputation

-> AI assists drafting. Human clicks "Approve" and "Submit." Accountability is never hidden.

4. Why real-time progress instead of status reports?

Multiple DDQs at once

Teams need to see all projects simultaneously

Status meetings waste time

Dashboard eliminates "what's the status?" conversations

-> Dashboard shows all DDQs, progress per question, assignees, and blockers. Always current.
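The dashboard view can be thought of as a rollup over question-level records. The sketch below is illustrative only: the `Question` fields, the status names ("draft", "review", "approved"), and the `summarize` function are assumptions made for the example, not the product's data model.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Question:
    """One DDQ question as the dashboard might see it (hypothetical shape)."""
    text: str
    status: str                     # assumed values: "draft", "review", "approved"
    assignee: Optional[str] = None  # None means nobody owns the question yet
    blocked: bool = False


def summarize(ddq_name: str, questions: list[Question]) -> dict:
    """Roll question-level state up into one dashboard row for a DDQ."""
    counts = Counter(q.status for q in questions)
    total = len(questions)
    approved = counts.get("approved", 0)
    return {
        "ddq": ddq_name,
        "total": total,
        "approved": approved,
        # Guard against an empty DDQ to avoid dividing by zero.
        "percent_complete": round(100 * approved / total) if total else 0,
        "unassigned": sum(1 for q in questions if q.assignee is None),
        "blockers": [q.text for q in questions if q.blocked],
    }
```

Because the row is computed from the questions themselves, it stays current without anyone writing a status report.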

AI Boundaries

Where AI Stops

The easy part was deciding where AI helps. The hard part was deciding where it shouldn't.

Fund managers have legal liability for DDQ responses. Investors require human accountability. If AI auto-submitted an incorrect answer, the consequences would be severe—regulatory issues, damaged relationships, potential lawsuits.

The Line I Drew:

| AI helps | Human decides |
| --- | --- |
| Parse incoming questions | Verify parsing is accurate |
| Match to past answers | Choose which answer to use |
| Show confidence levels | Decide when to trust the match |
| Draft suggested text | Edit and finalize wording |
| Track progress | Own the approval |
| (none) | Click submit |

Key Design Decision:

When a response moves to "Review" status, AI assistance fades. The final stages are human-only. This keeps accountability visible, not hidden behind automation.
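One way to express this boundary is as a small permission table over response statuses. The sketch below is a hedged illustration rather than the shipped implementation: only the "Review" status and the "Approve" and "Submit" actions come from the case study, while the `Draft` status, the `Actor` type, and the function names are assumptions.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "Draft"          # assumed name for the pre-review stage
    REVIEW = "Review"
    APPROVED = "Approved"
    SUBMITTED = "Submitted"


class Actor(Enum):
    AI = "ai"
    HUMAN = "human"


# Every status change lists who may trigger it. AI appears nowhere,
# so approval and submission always trace back to a person.
ALLOWED = {
    (Status.DRAFT, Status.REVIEW): {Actor.HUMAN},
    (Status.REVIEW, Status.APPROVED): {Actor.HUMAN},
    (Status.APPROVED, Status.SUBMITTED): {Actor.HUMAN},
}


def ai_can_assist(status: Status) -> bool:
    """AI suggestions are offered while drafting and fade from Review onward."""
    return status is Status.DRAFT


def transition(current: Status, target: Status, actor: Actor) -> Status:
    """Return the new status, or raise if the move or the actor is not allowed."""
    allowed_actors = ALLOWED.get((current, target))
    if allowed_actors is None:
        raise ValueError(f"No transition from {current.value} to {target.value}")
    if actor not in allowed_actors:
        raise PermissionError(f"{actor.value} may not move a response to {target.value}")
    return target
```

Keeping the rule in one table makes the boundary easy to audit: adding AI to any transition would be an explicit, visible change rather than a side effect.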

Design Process - Iteration

What didn't work, and why we changed it

V1: AI in Charge, Workflow Scattered

What we built

A home screen that opened directly into an AI agent, with the Data Room, project navigation, and answer workspace all living as separate surfaces.

Why it failed

The AI greeted users before they even knew what they were working on. And once they started, the three core actions (finding documents, matching past answers, and writing responses) existed in completely different places. Users had to mentally stitch the workflow together themselves.

Insight

AI should support the workflow, not own the entry point.

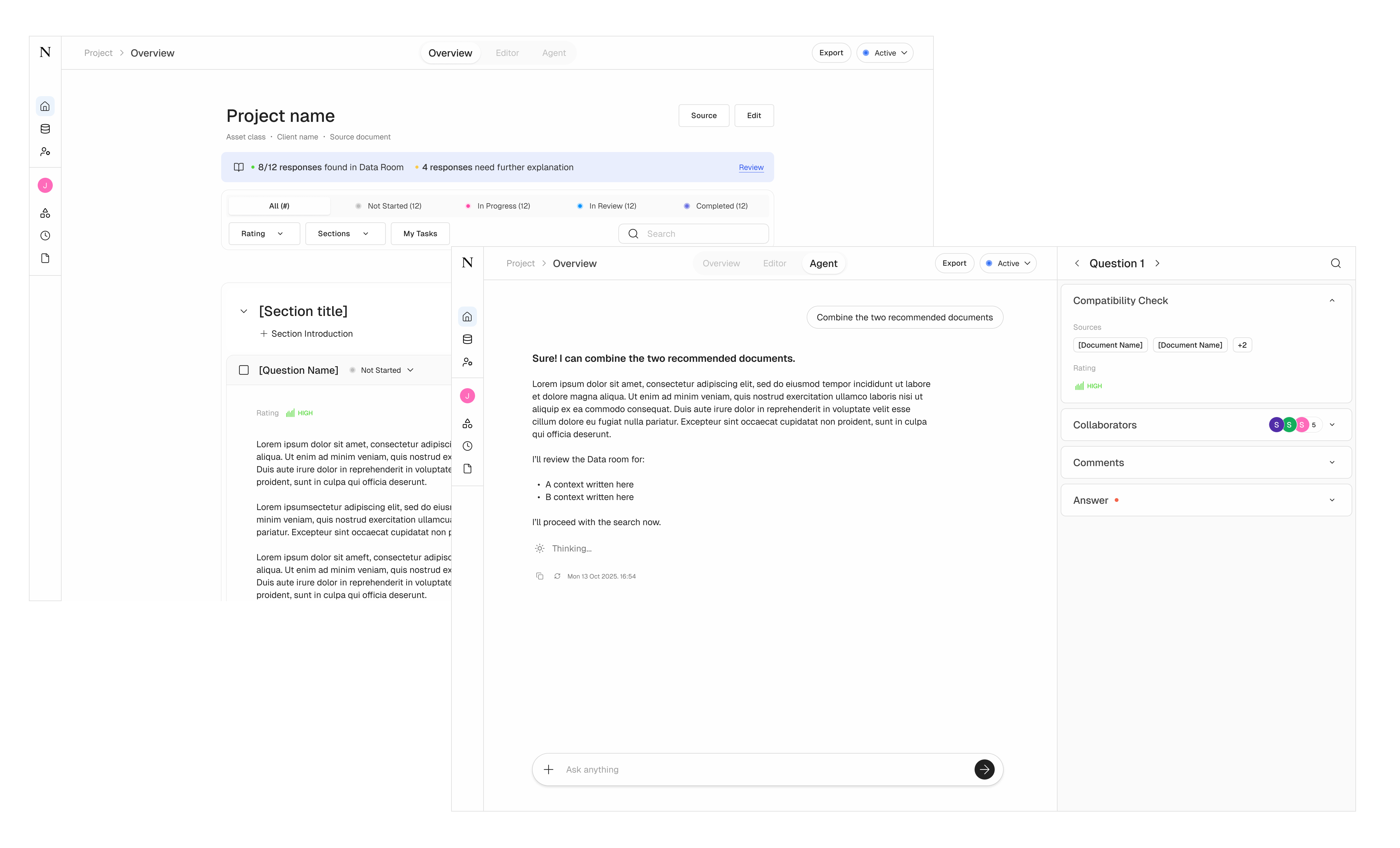

V2: Editor and Agent Living Apart

What we built

A project workspace with status tracking and question overview, plus a separate Agent tab where users could ask AI for help, with collaboration details (sources, rating, collaborators, comments) surfaced inside the agent panel.

Why it failed

Editing a response and talking to the AI were two different screens. Users had to switch tabs mid-thought. And placing collaboration info inside the agent panel meant people were jumping into AI mode just to check who was working on what, a completely different job to be done.

Insight

Don't make users switch contexts to finish one task.

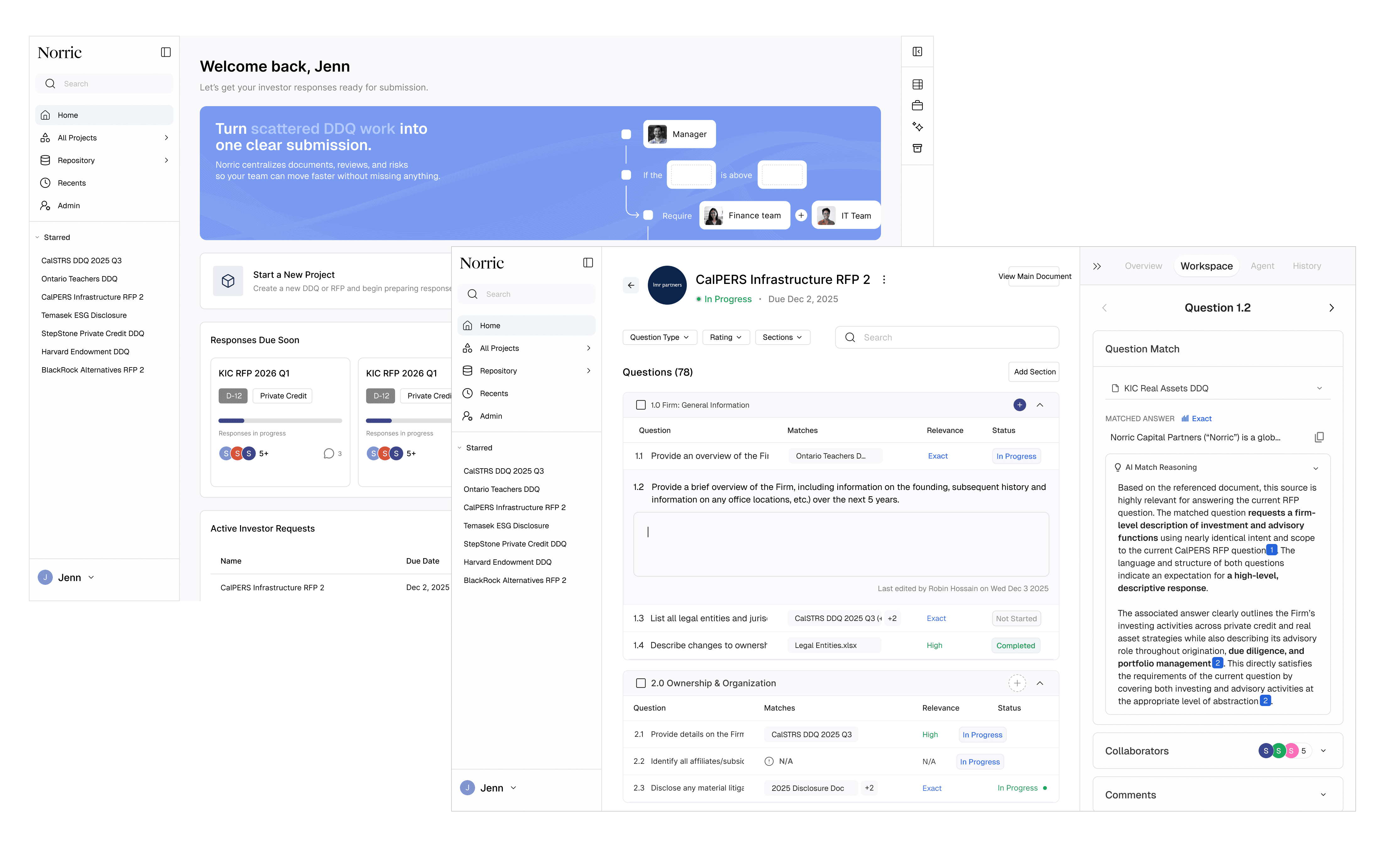

V3 (Final): One Surface, Human in Control

What we built

A unified dashboard showing all active DDQs, due dates, and team progress at a glance, then a single workspace where users write responses with AI match suggestions, source documents, and collaborator status all visible side by side.

Why it worked

Users no longer had to switch surfaces mid-task.

While writing a response, AI surfaces matched answers from the repository, but the user decides whether to use them. The source documents behind each match are visible inline, so trust is built into the flow.

Collaboration status sits in the same panel, so "who's handling this?" is answered without leaving the screen.

Impact

| Metric | Before | After |
| --- | --- | --- |
| Time to find past answer | 30+ min searching | <30 sec with AI match |
| DDQ completion time | ~40 hours | ~15 hours (projected) |
| Questions with reusable matches | Unknown | 60% auto-matched |
| Approval audit trail | Buried in email | Built into system |
| Progress visibility | Ask via email | Real-time dashboard |

Looking back

What I learned from Norric AI.

The real problem hides behind the obvious one

"Add AI" was the brief. "Make knowledge findable" was the actual problem.

Designing boundaries is harder than designing features

Knowing where AI should stop required more thought than where it should help.

Visibility is underrated

A simple progress dashboard eliminated hours of status meetings.